Creating a Function tool

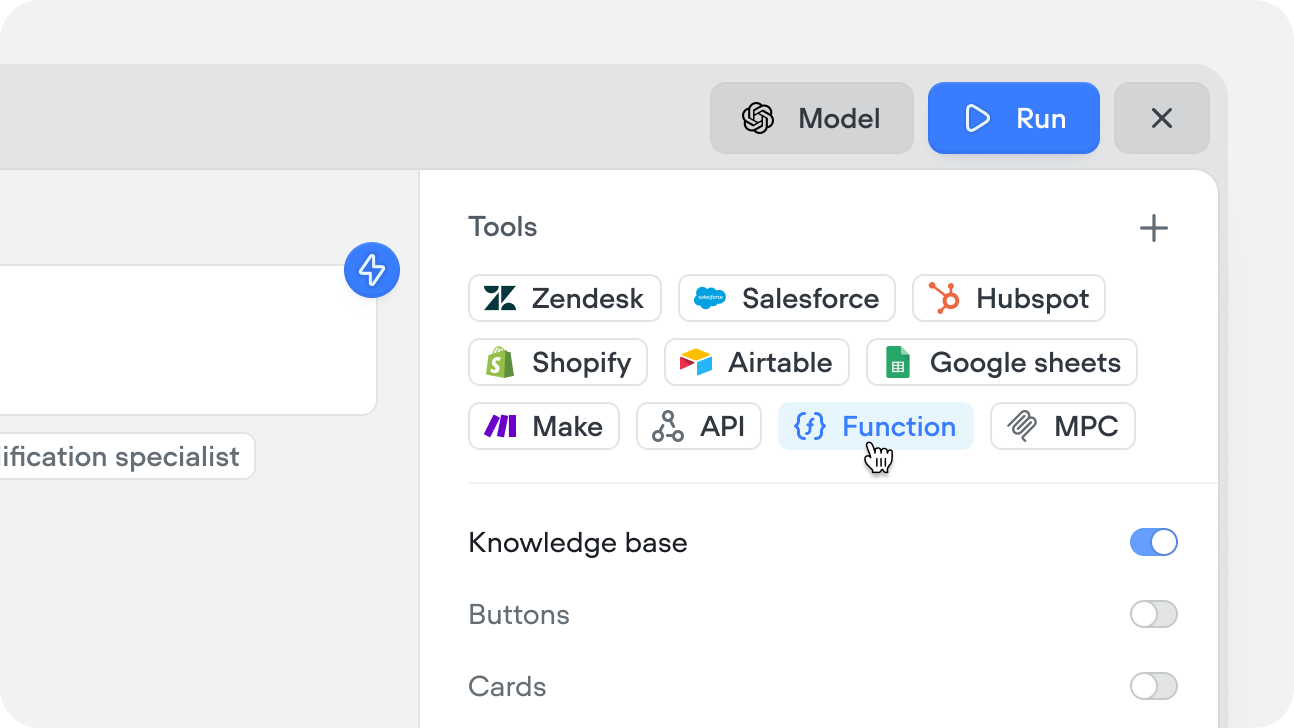

Create the tool

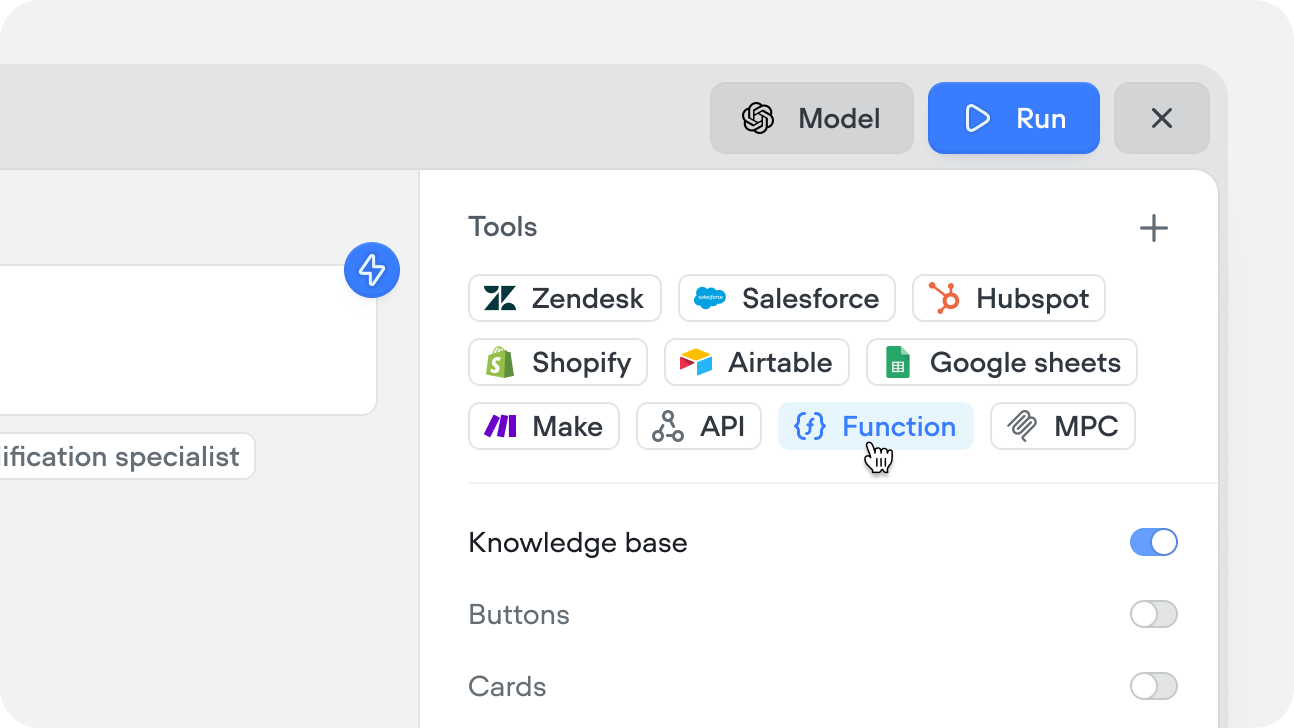

You can add an existing Function tool, or create a new one, directly from within a playbook.

You can also create Function tools from within a workflow using the Function step, or from the Tools tab in the CMS.

Define input variables

Add the variables your function needs as inputs. These are passed in by a playbook or a Function step and are accessible inside your code. Add a description to each so the agent knows what to pass.

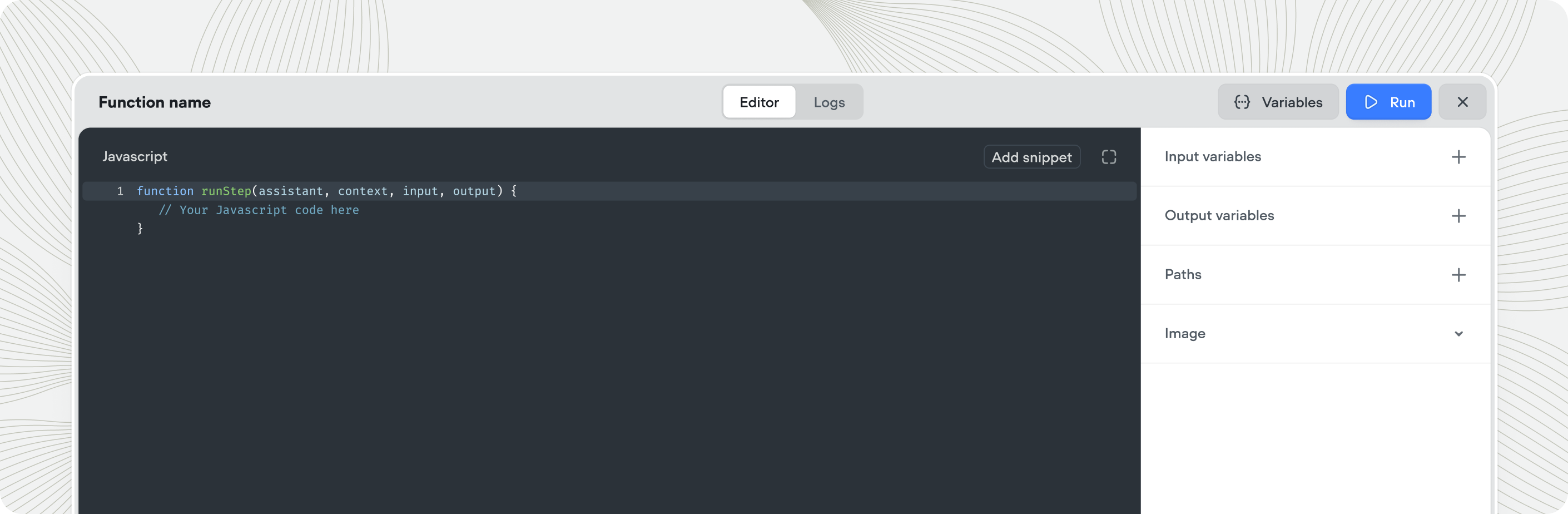

Write your function

Write your JavaScript in the code editor. Your input variables are available directly by name. Return your output by setting the output variable values — these are passed back to the conversation.

Define output variables

Add output variables for any values your function should return to the agent. These are available in the conversation after the function runs.
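
The snippet below is a rough, standalone sketch of how input variables, function code, and output variables fit together. It formats a raw date into a friendly string, one of the fixed-process examples given for workflows. It is plain JavaScript, not the editor's exact contract: the input `deliveryDate` and the outputs `formattedDate` and `isValid` are hypothetical names. The wrapper function that receives inputs and returns an object of outputs only stands in for how the editor exposes inputs by name and collects output variable values, so that the sketch runs on its own.

```javascript
// Hypothetical stand-in for a Function tool body.
// In the editor, input variables are available directly by name; here they
// are destructured from an object so the sketch can run outside the editor.
function formatDeliveryDate({ deliveryDate }) {
  // deliveryDate: hypothetical input variable, e.g. "2024-06-13"
  const date = new Date(`${deliveryDate}T00:00:00Z`);

  if (Number.isNaN(date.getTime())) {
    // Return a predictable value instead of throwing, so the agent still
    // receives outputs it can act on (for example, asking for a new date).
    return { formattedDate: "", isValid: false };
  }

  const formattedDate = date.toLocaleDateString("en-US", {
    weekday: "long",
    month: "long",
    day: "numeric",
    timeZone: "UTC", // format in UTC so the day does not shift by server time zone
  });

  // formattedDate and isValid: hypothetical output variables
  return { formattedDate, isValid: true };
}

console.log(formatDeliveryDate({ deliveryDate: "2024-06-13" }).formattedDate);
```

Returning an explicit `isValid` flag, rather than throwing, gives the agent or the next workflow step something concrete to branch on when the input is malformed.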

Using the Function tool

There are two ways to use a Function tool:

In a playbook

Add the Function tool to a playbook’s Tools editor. The agent calls it autonomously when the conversation requires it — based on your playbook instructions and the tool’s name and description.

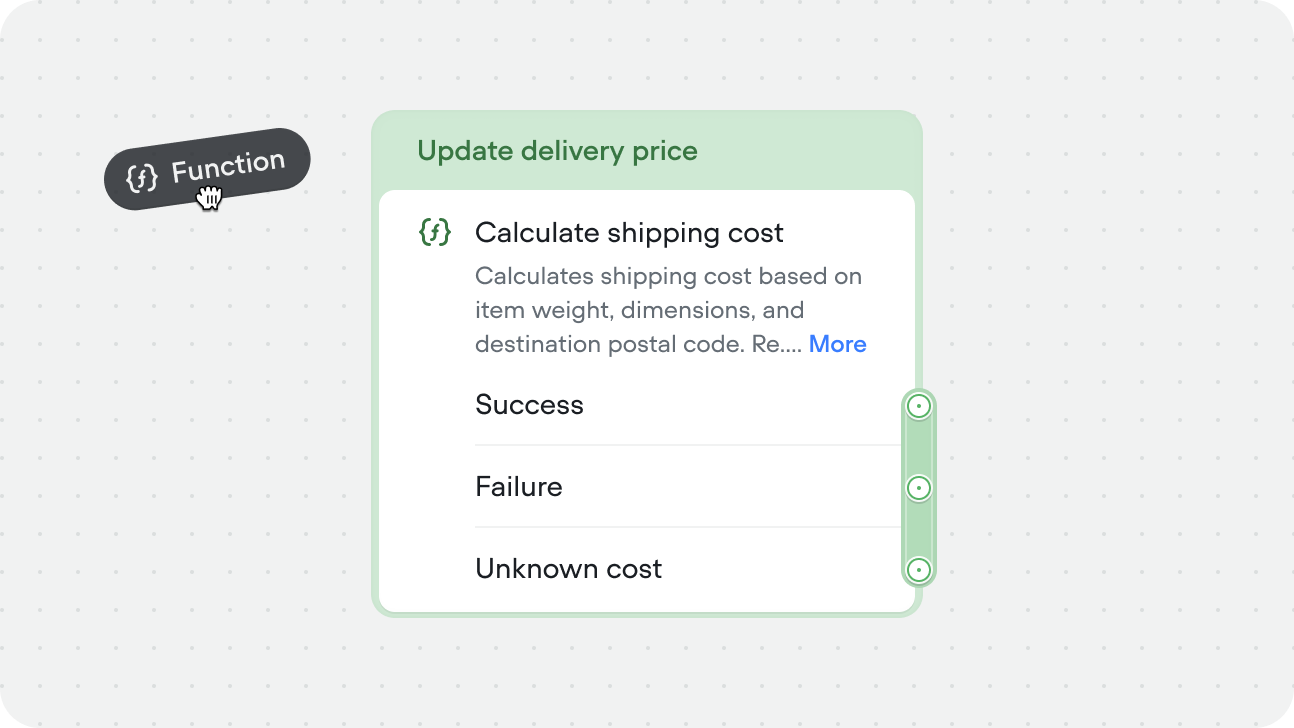

In a workflow

Drag a Function step onto the canvas and select the Function tool you want to run. Input variables are mapped explicitly in the step config — the function runs at that point in the flow every time. Use this when the logic is part of a fixed process — for example, always formatting a date before displaying it, or always calculating a total before confirming an order.
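
As an illustration of the "always calculate a total before confirming an order" case, here is a minimal sketch of the logic such a Function step might run. The inputs `items` and `taxRate` and the outputs `subtotal`, `tax`, and `total` are hypothetical names. Because inputs are mapped explicitly in the step config, the code can assume they are always present. Prices are kept in whole cents, a common choice to avoid floating-point rounding errors in money arithmetic.

```javascript
// Hypothetical Function tool body for a fixed "calculate order total" step.
function calculateOrderTotal({ items, taxRate }) {
  // items: hypothetical input, an array of { price, quantity } with price in cents
  // taxRate: hypothetical input, e.g. 0.08 for 8%
  const subtotalCents = items.reduce(
    (sum, item) => sum + item.price * item.quantity,
    0
  );

  // Round tax to whole cents so the total is always an exact cent amount.
  const taxCents = Math.round(subtotalCents * taxRate);
  const totalCents = subtotalCents + taxCents;

  // Hypothetical output variables, formatted as strings for display.
  return {
    subtotal: (subtotalCents / 100).toFixed(2),
    tax: (taxCents / 100).toFixed(2),
    total: (totalCents / 100).toFixed(2),
  };
}

console.log(
  calculateOrderTotal({
    items: [
      { price: 1999, quantity: 2 },
      { price: 500, quantity: 1 },
    ],
    taxRate: 0.08,
  })
);
```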

Running Function tools asynchronously

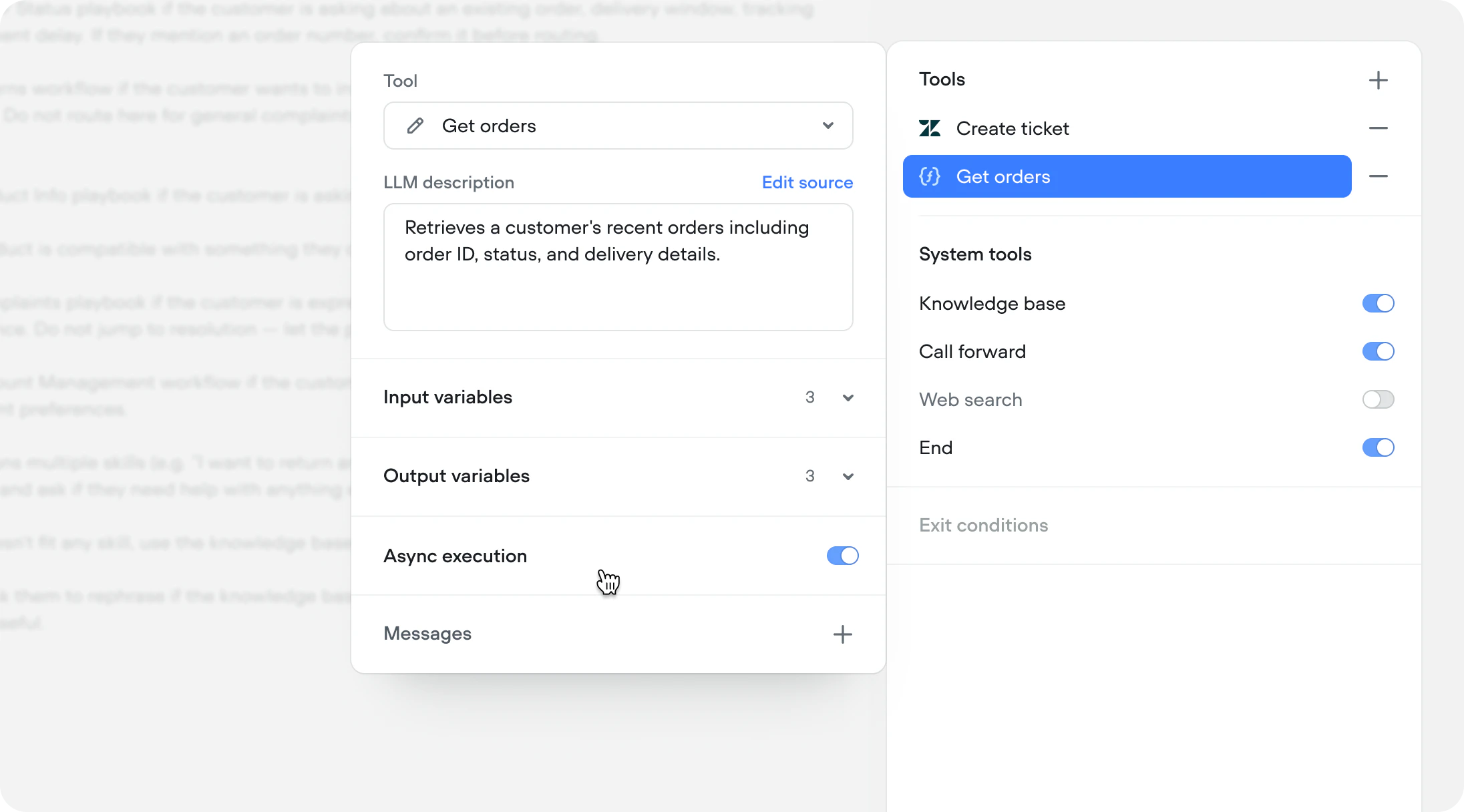

Function tools can run asynchronously in both workflows and playbooks. The async toggle is set on each instance of the tool, not on the tool itself, so you can use the same tool with different configurations in different places.
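
Because the async setting belongs to each instance rather than to the tool, the same tool can block the conversation in one place and run in the background in another. The sketch below is only an analogy using plain JavaScript Promises, not how the platform schedules Function tools internally. Awaiting the call stands in for an instance with async off, and starting the call without awaiting it stands in for an instance with async on (fire and forget). The names `logEvent`, `runInstanceSync`, and `runInstanceAsync` are hypothetical.

```javascript
// Analogy only: models the per-instance async toggle with plain Promises.

// One hypothetical "tool": a slow side effect such as logging an event.
function logEvent(name, log) {
  return new Promise((resolve) => {
    setTimeout(() => {
      log.push(`logged:${name}`);
      resolve({ ok: true });
    }, 50); // simulated network latency
  });
}

// Instance with async OFF: the turn waits for the tool before continuing.
async function runInstanceSync(log) {
  await logEvent("checkout_started", log);
  log.push("agent:continues");
}

// Instance with async ON (fire and forget): the call starts in the
// background and the turn continues immediately without reading the result.
async function runInstanceAsync(log) {
  logEvent("checkout_started", log).catch(() => {
    // Ignore failures: nothing in the conversation depends on this call.
  });
  log.push("agent:continues");
}
```

When `runInstanceSync` finishes, its log contains the logged event followed by `agent:continues`. When `runInstanceAsync` finishes, its log contains only `agent:continues`; the logged event appears a moment later, once the background call completes.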

Fire and forget

Use async when you don’t need the Function response to continue the conversation. The call fires in the background and the agent moves on immediately. This is ideal for logging and side effects: sending an event to an analytics platform, writing to a CRM, triggering a webhook, or any other operation where the agent doesn’t need to reference the result. The user gets a faster experience because the conversation never pauses for a background task.

Async with deferred response

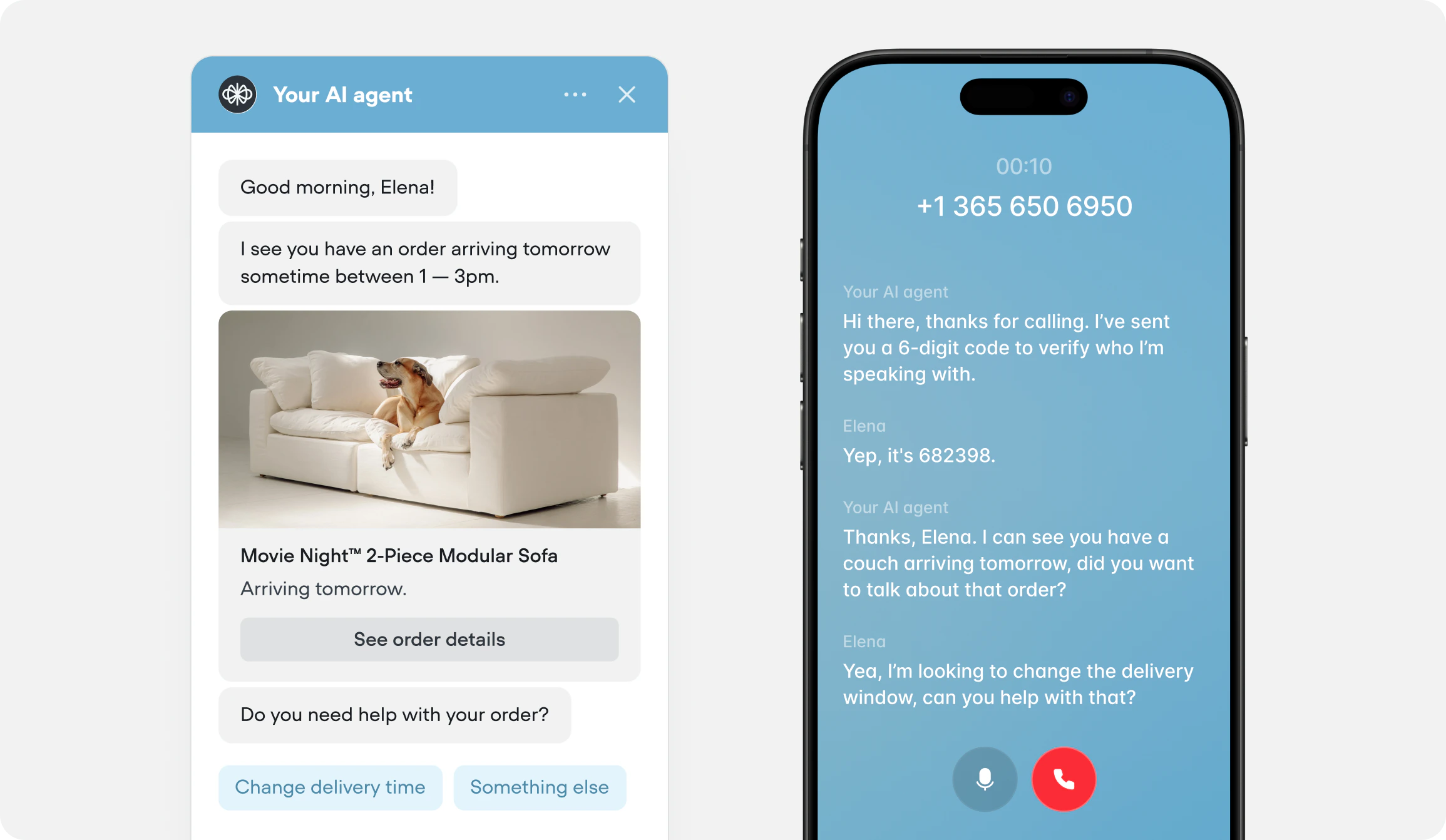

Use async when the Function call is slow or not immediately relevant, but the response still matters. The call fires in the background and the agent continues the conversation naturally. When the response arrives, it’s captured and the agent can reference it on the next turn. For example, during your initialization workflow, you authenticate the user and simultaneously fire an async call to fetch their recent orders. By the time authentication completes and the agent takes over, the order data is already available. The agent can open the conversation with “I see you have a recent order for a queen mattress arriving Thursday — is that what you’re calling about?” instead of asking the user to explain why they’re reaching out.
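
The initialization example can be approximated with plain JavaScript Promises to show why the order data is ready in time: the slow order lookup starts first and runs while authentication is awaited, so the total wait is roughly the longer of the two calls rather than their sum. This is an analogy, not the platform's implementation. `authenticateUser`, `fetchRecentOrders`, and `buildOpeningLine` are hypothetical stand-ins, and the delays simulate network calls.

```javascript
// Analogy only: models "async with deferred response" with plain Promises.
const delay = (ms, value) =>
  new Promise((resolve) => setTimeout(() => resolve(value), ms));

// Hypothetical stand-ins for Function tools.
function authenticateUser(userId) {
  return delay(30, { userId, authenticated: true });
}

function fetchRecentOrders(userId) {
  return delay(20, [{ userId, item: "queen mattress", arrives: "Thursday" }]);
}

function buildOpeningLine(session, orders) {
  if (!session.authenticated || orders.length === 0) {
    return "How can I help you today?";
  }
  const [latest] = orders;
  return `I see you have a recent order for a ${latest.item} arriving ${latest.arrives}. Is that what you're calling about?`;
}

async function initializationWorkflow(userId) {
  // Fire the slow lookup first without awaiting it (the async instance).
  // Attach a fallback now so a failure cannot become an unhandled rejection.
  const ordersPromise = fetchRecentOrders(userId).catch(() => []);

  // Authentication runs at the same time and is awaited (a regular instance).
  const session = await authenticateUser(userId);

  // By the time the agent takes over, the deferred result is already settled.
  const orders = await ordersPromise;
  return buildOpeningLine(session, orders);
}

initializationWorkflow("user-123").then((line) => console.log(line));
```

If the lookup fails, the fallback of an empty list lets the agent fall back to a generic greeting instead of blocking the conversation.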